Multi-Agent Portfolio Rebalancing Under Constraints: Architecture and Code

How to build a production-grade rebalancing system with specialized agents for signal generation, risk management, execution, and compliance — calibrated for India's T+1 settlement and regulatory reality.

Every rebalancing system I’ve seen at Indian wealth managers follows the same pattern: one big function that pulls current weights, pulls target weights from some model, computes the diff, and fires orders. Maybe it checks a few constraints. Maybe it doesn’t. The whole thing lives in a single process, a single codebase, often a single person’s head.

This works until it doesn’t, and when it fails, it fails in the worst possible way: silently. I learned this the hard way at a wealth management firm where a single-function rebalancer ran unchecked for three months before anyone noticed it was ignoring an updated sector cap. The failure modes are familiar. The optimizer generates a trade that violates a SEBI concentration limit because the constraint was hardcoded wrong six months ago. Or the system doesn’t account for T+1 settlement and tries to sell a stock that was bought the same morning. Or the execution logic doesn’t consider market impact, so a large-cap rebalance moves the stock 2% against you before you’re half done.

The fundamental problem is that rebalancing is not one task. It’s four tasks pretending to be one: signal generation, risk management, execution, and compliance. Each requires different expertise, different data, different update cadences, and – critically for regulated financial services – different audit trails.

This is where multi-agent architecture stops being an academic exercise and becomes a practical necessity.

Why Multi-Agent Is Not Architecture Astronautics

I want to address the obvious objection upfront: “Isn’t this overengineered? Can’t you just write a monolithic optimizer with constraints?”

You can. I have. And here’s what happens in production:

Debugging becomes forensic archaeology. When a rebalance produces a bad outcome, you need to figure out: was the signal wrong? Did the risk model miscalculate? Did the execution timing create adverse selection? Did a compliance rule get bypassed? In a monolithic system, these are all tangled together. In a multi-agent system, each agent produces an independent, timestamped output. You can reconstruct exactly what happened at each stage.

Regulatory audit requires it. SEBI’s framework for algorithmic trading (circular SEBI/HO/MRD2/PoD-1/P/CIR/2024/132 and subsequent updates) increasingly demands explainability at each decision stage. When the regulator asks “why did you execute this trade?”, you need to show: the signal that triggered it, the risk assessment that shaped it, the execution strategy that timed it, and the compliance check that approved it. Four separate, auditable artifacts. A monolithic optimizer gives you one output and a prayer.

Independent scaling and failure isolation. Your signal agent can run on GPU instances processing alternative data, while your compliance agent runs on a lightweight CPU instance checking rules. If the signal agent crashes, the system doesn’t rebalance (safe default). If the compliance agent goes down, trades queue but don’t execute (also safe). In a monolith, any failure is a total failure.
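The safe defaults described above can be sketched as a thin wrapper around each stage. This is a minimal illustration and not the orchestrator used later in this article; `StageResult`, `run_stage`, and the `on_failure` labels are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class StageResult:
    ok: bool
    value: Optional[Any] = None
    action: str = "continue"      # what the pipeline should do next
    error: Optional[str] = None


def run_stage(name: str, fn: Callable[[], Any], on_failure: str) -> StageResult:
    """Run one agent call; on any exception fall back to a safe action.

    on_failure is a hypothetical label ("skip_rebalance", "queue_trades")
    that the caller interprets.
    """
    try:
        return StageResult(ok=True, value=fn())
    except Exception as exc:      # isolate the failure to this stage
        return StageResult(ok=False, action=on_failure,
                           error=f"{name}: {exc}")


def failing_signal_agent():
    raise RuntimeError("market data feed unavailable")


def healthy_compliance_agent():
    return {"approved": True}


signal = run_stage("signal", failing_signal_agent,
                   on_failure="skip_rebalance")
compliance = run_stage("compliance", healthy_compliance_agent,
                       on_failure="queue_trades")
```

The point of the design is that the failure action is decided per stage, so a crash never falls through to order placement.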

These aren’t theoretical benefits. They’re the difference between a system that survives a SEBI inspection and one that doesn’t.

The Four-Agent Architecture

Here’s the architecture. Each agent is a self-contained service with defined inputs, outputs, and responsibilities.

flowchart TB
    subgraph SignalAgent["Signal Agent"]
        SA1[Market Data Ingestion<br/>NSE/BSE via WebSocket]
        SA2[Alternative Data<br/>News, Filings, Macro]
        SA3[Alpha Signal Generation<br/>Momentum + Mean Reversion]
        SA4[Output: Signal Vector<br/>per-asset confidence scores]
    end

    subgraph RiskAgent["Risk Agent"]
        RA1[Current Portfolio State<br/>Holdings + Cash]
        RA2[Factor Risk Decomposition<br/>Market, Size, Value, Momentum]
        RA3[VaR / CVaR Computation<br/>Historical + Parametric]
        RA4[Output: Risk-Adjusted<br/>Target Weights]
    end

    subgraph ExecutionAgent["Execution Agent"]
        EA1[Trade Schedule<br/>Almgren-Chriss Impact Model]
        EA2[T+1 Settlement Check<br/>Intraday Position Tracking]
        EA3[TWAP / VWAP Execution<br/>with Impact Estimation]
        EA4[Output: Order List<br/>with Timing + Sizing]
    end

    subgraph ComplianceAgent["Compliance Agent"]
        CA1[SEBI MF Regulations<br/>Concentration + Sector Caps]
        CA2[FEMA Rules<br/>NRI / FPI Limits]
        CA3[Internal Risk Limits<br/>Drawdown + Turnover]
        CA4[Output: Approved / Rejected<br/>with Reasoning]
    end

    SA4 --> RA1
    RA4 --> EA1
    EA4 --> CA1
    CA4 -->|Approved| EX[Execute Trades]
    CA4 -->|Rejected| RJ[Queue for Review]

Signal Agent

The signal agent’s job is singular: produce a vector of alpha signals with associated confidence scores. It doesn’t know about risk, execution, or compliance. It only knows about data and the patterns it extracts.

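For a production system, you’d run multiple signal sub-models – momentum, mean-reversion, fundamental value, event-driven – and ensemble their outputs. The signal vector is a per-asset expected return forecast, expressed over the same horizon and in the same return units (for example, daily returns) as the data behind the risk model’s covariance matrix. If the two are on different scales, the risk aversion parameter stops meaning anything.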
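One simple way to ensemble sub-model outputs is a confidence-weighted average per asset. The sketch below assumes every sub-model already reports its forecast in the same return units; `SubSignal` and `ensemble_alpha` are hypothetical names, and the weighting scheme is an illustrative choice rather than the one used in the implementation later in this article.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SubSignal:
    model: str         # e.g. "momentum", "value"
    alpha: float       # expected-return forecast, same units for every model
    confidence: float  # 0-1


def ensemble_alpha(per_asset: Dict[str, List[SubSignal]]) -> Dict[str, float]:
    """Confidence-weighted average of sub-model forecasts per asset."""
    combined = {}
    for asset, subs in per_asset.items():
        total_conf = sum(s.confidence for s in subs)
        if total_conf == 0:
            combined[asset] = 0.0   # no model has a view: stay neutral
        else:
            combined[asset] = (
                sum(s.alpha * s.confidence for s in subs) / total_conf)
    return combined


forecasts = {
    "TCS": [SubSignal("momentum", 0.02, 0.75),
            SubSignal("value", -0.01, 0.25)],
    "ITC": [SubSignal("momentum", 0.01, 0.0)],
}
signal_vector = ensemble_alpha(forecasts)
```

Here TCS ends up at 0.0125 (0.75 × 0.02 plus 0.25 × −0.01), while ITC stays at zero because its only model reports no confidence.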

Risk Agent

The risk agent takes the signal vector and the current portfolio, and produces risk-adjusted target weights. This is where the constrained optimization happens. The risk agent owns the objective function:

Objective: Maximize expected alpha, penalized by risk and transaction costs.

\[\max_w \; \alpha^T w - \frac{\lambda}{2} w^T \Sigma w - \kappa \|w - w_{\text{current}}\|_1\]

Subject to:

\[\sum_i w_i = 1 \quad \text{(fully invested)}\]

\[w_i \geq 0 \quad \forall i \quad \text{(long-only)}\]

\[w_i \leq 0.10 \quad \forall i \quad \text{(SEBI single-stock cap)}\]

\[\sum_{i \in S_k} w_i \leq 0.25 \quad \forall k \quad \text{(sector cap for multi-cap)}\]

\[\|w - w_{\text{current}}\|_1 \leq \tau \quad \text{(turnover constraint)}\]

\[w_{\text{cash}} \geq 0.05 \quad \text{(liquidity buffer)}\]

Where $\alpha$ is the signal vector, $\Sigma$ is the factor covariance matrix, $\lambda$ is the risk aversion parameter, $\kappa$ is the transaction cost penalty, and $\tau$ is the maximum turnover allowed per rebalance. The budget constraint counts cash as one of the positions in $w$; the code below keeps cash implicit instead and constrains the equity weights to sum to at most $1 - 0.05$.

The critical detail: cvxpy handles this formulation natively because it’s convex (the L1 turnover term is linearizable). If you try to add non-convex constraints – and India’s regulatory landscape occasionally demands them – you need to approximate or use mixed-integer programming.
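For readers who want to see why the L1 term is linearizable, here is the standard reformulation, written with auxiliary buy and sell vectors $b$ and $s$. These two symbols are introduced only for this sketch and do not appear elsewhere in the article; all other constraints carry over unchanged.

```latex
% Split each trade into a non-negative buy part and sell part
w - w_{\text{current}} = b - s, \qquad b \geq 0, \quad s \geq 0

% The L1 turnover is then replaced by a linear expression in (b, s)
\|w - w_{\text{current}}\|_1 \;\longrightarrow\; \mathbf{1}^T (b + s)

% Objective and turnover constraint in linearized form
\max_{w,\,b,\,s} \; \alpha^T w - \frac{\lambda}{2} w^T \Sigma w
    - \kappa \, \mathbf{1}^T (b + s)
\quad \text{s.t.} \quad \mathbf{1}^T (b + s) \leq \tau
```

Because $\kappa > 0$ penalizes $b + s$, an optimal solution never buys and sells the same asset at once, so $\mathbf{1}^T (b + s)$ equals the L1 norm at the optimum. A modeling layer such as cvxpy performs an equivalent transformation internally when it sees `cp.norm1`.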

Execution Agent

Once the risk agent produces target weights, the execution agent computes how to get there. This is where T+1 settlement, market impact, and timing come in.

The Almgren-Chriss framework models temporary and permanent market impact as functions of trade size relative to average daily volume:

\[\text{Temporary impact} = \eta \cdot \sigma \cdot \left(\frac{v}{V}\right)^{0.6}\]

\[\text{Permanent impact} = \gamma \cdot \sigma \cdot \left(\frac{v}{V}\right)\]

Where $v$ is the trade volume, $V$ is the average daily volume, $\sigma$ is daily volatility, and $\eta$, $\gamma$ are calibrated impact coefficients. The 0.6 exponent reflects the empirical finding that temporary impact is concave in trade size – something well-documented in US markets and that I’ve found holds reasonably for NSE large-caps, though mid-caps tend toward 0.7-0.8.
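A quick worked example makes the two formulas concrete. The sketch below uses the default coefficients from the implementation later in this article ($\eta = 0.05$, $\gamma = 0.01$); the order sizes are made up for illustration and `impact_bps` is a hypothetical helper.

```python
def impact_bps(trade_value: float, adv_value: float, daily_vol: float,
               eta: float = 0.05, gamma: float = 0.01,
               exponent: float = 0.6) -> dict:
    """Temporary and permanent impact in basis points."""
    participation = trade_value / adv_value            # v / V
    temporary = eta * daily_vol * participation ** exponent
    permanent = gamma * daily_vol * participation
    return {
        "participation": participation,
        "temporary_bps": temporary * 1e4,
        "permanent_bps": permanent * 1e4,
    }


# INR 5 Cr order in a stock with INR 50 Cr ADV and 2% daily volatility
small = impact_bps(5e7, 5e8, 0.02)

# Doubling the order size less than doubles temporary impact (concavity)
large = impact_bps(1e8, 5e8, 0.02)
ratio = large["temporary_bps"] / small["temporary_bps"]   # equals 2 ** 0.6
```

At 10% participation the temporary impact comes out at roughly 2.5 bps and the permanent impact at 0.2 bps. Doubling the order multiplies temporary impact by about 1.52 rather than 2, which is what concavity means in practice.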

The execution agent also enforces T+1 settlement logic: if a stock was purchased today, it cannot be sold until the next trading day. This sounds trivial but has real portfolio-level implications – it constrains the feasible set of rebalancing trades and can make the risk agent’s optimal solution infeasible.
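One way to keep the optimizer’s solution feasible is to hand it per-asset weight floors instead of discovering the block at execution time. The sketch below is a conservative simplification: it locks the whole position in any asset bought today, matching the set-of-assets convention used in the code later in this article. `t1_lower_bounds` and `violates_t1` are hypothetical helpers.

```python
from typing import Dict, Set


def t1_lower_bounds(current_weights: Dict[str, float],
                    today_purchases: Set[str]) -> Dict[str, float]:
    """Per-asset weight floors: positions bought today cannot be reduced."""
    return {asset: (w if asset in today_purchases else 0.0)
            for asset, w in current_weights.items()}


def violates_t1(target_weights: Dict[str, float],
                floors: Dict[str, float], tol: float = 1e-9) -> Set[str]:
    """Assets whose target weight would require selling a same-day purchase."""
    return {asset for asset, floor in floors.items()
            if target_weights.get(asset, 0.0) < floor - tol}


current = {"RELIANCE": 0.095, "TCS": 0.095}
floors = t1_lower_bounds(current, today_purchases={"RELIANCE"})
blocked = violates_t1({"RELIANCE": 0.06, "TCS": 0.10}, floors)
```

In a cvxpy formulation these floors would replace the plain long-only bound, for example `w >= lower` with `lower` built from `floors`, so the optimizer never proposes a sell it cannot execute.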

Compliance Agent

The compliance agent is the final gate. It checks every proposed trade against a rule set that includes:

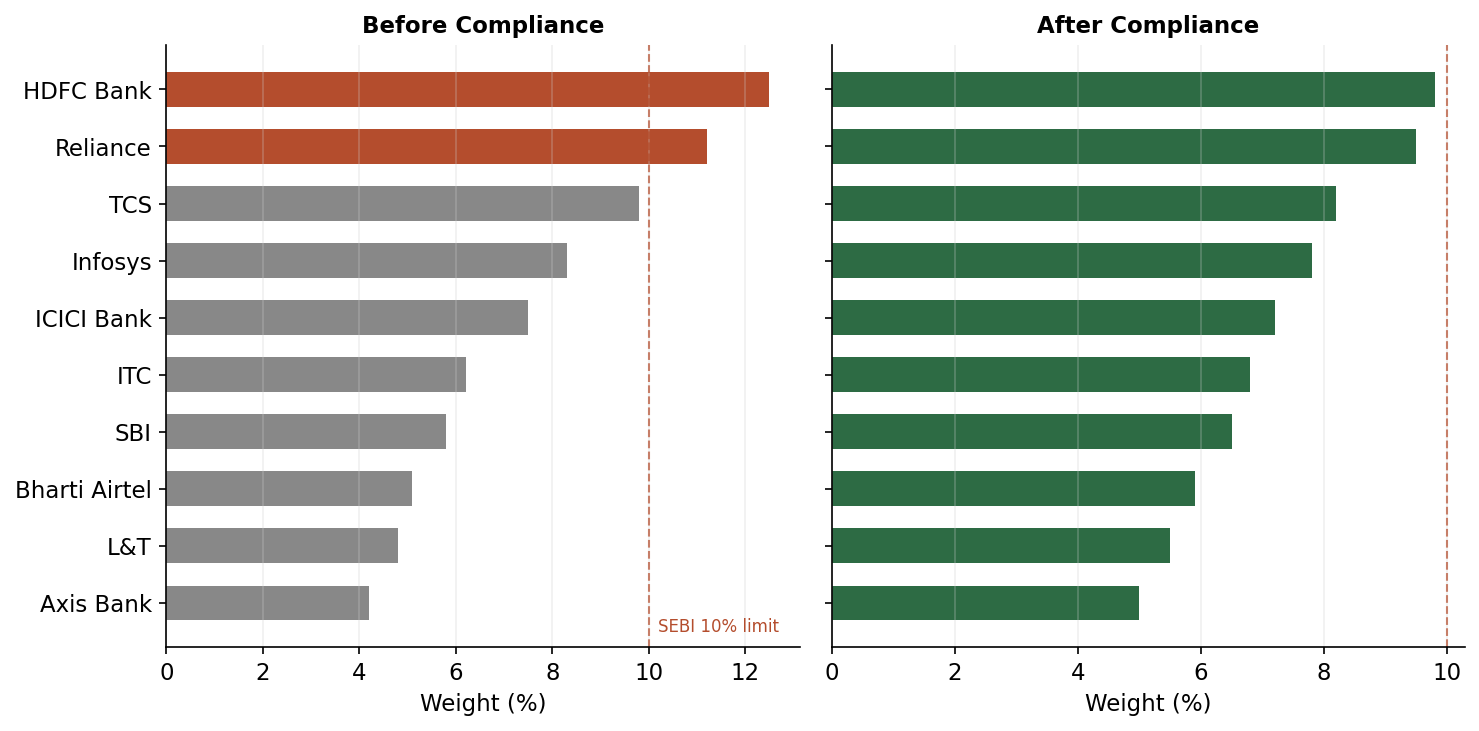

- SEBI Mutual Fund Regulations: no single issuer above 10% for diversified equity, sector caps of 25% for multi-cap funds, minimum 25% each in large, mid, and small caps for multi-cap category.

- FEMA/RBI Rules: FPI/NRI ownership limits per company (varies, generally capped at sectoral limits), reporting requirements for large positions.

- NSE Circuit Breakers: if a stock has hit its circuit limit (2%, 5%, 10%, or 20% depending on category), the execution agent can’t trade it. The compliance agent flags this.

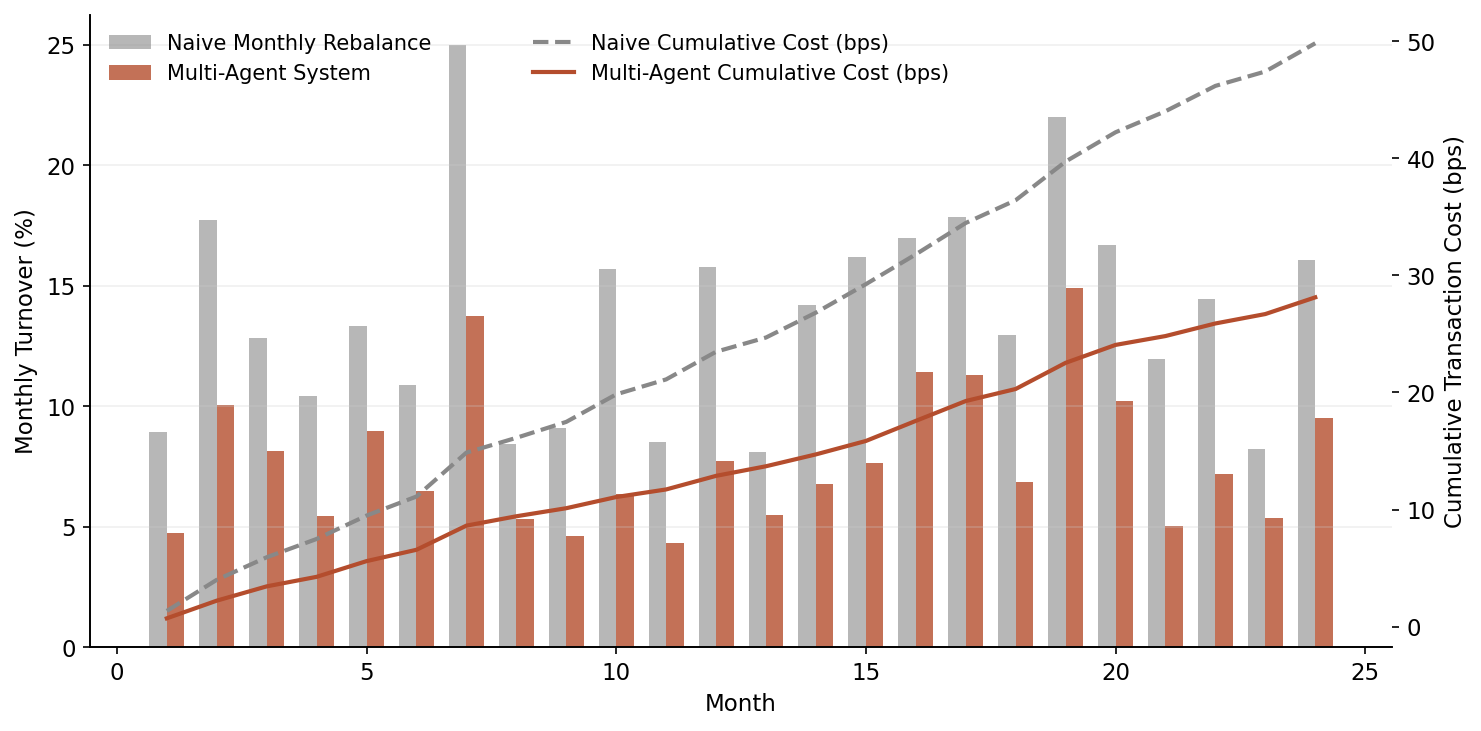

- STT and Stamp Duty Impact: for high-frequency rebalancing, the cumulative STT (0.1% on equity delivery sell-side) and stamp duty (0.015% on buy-side) can eat 20-30 bps annually. The compliance agent flags when projected turnover costs exceed a threshold.

- Internal Limits: max drawdown budgets, sector-level stop losses, liquidity coverage ratios.
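The 20-30 bps figure in the STT and stamp duty item can be checked with simple arithmetic. The sketch below uses the rates quoted in that item and assumes a self-financing rebalance, so half of the L1 turnover is sells and half is buys; the monthly cadence and `annual_regulatory_cost_bps` are illustrative assumptions.

```python
def annual_regulatory_cost_bps(l1_turnover_per_rebalance: float,
                               rebalances_per_year: int,
                               stt_sell_bps: float = 10.0,
                               stamp_buy_bps: float = 1.5) -> float:
    """Annual STT + stamp duty drag in bps of portfolio value.

    Assumes a self-financing rebalance: half of the L1 turnover is
    sells (STT applies) and half is buys (stamp duty applies).
    """
    sells = buys = l1_turnover_per_rebalance / 2
    per_rebalance = sells * stt_sell_bps + buys * stamp_buy_bps
    return per_rebalance * rebalances_per_year


# 30% L1 turnover per rebalance, rebalanced monthly
monthly = annual_regulatory_cost_bps(0.30, 12)
```

With these inputs the drag is about 20.7 bps a year, at the low end of the range quoted above.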

If any check fails, the trade is rejected with a structured reason, and the orchestrator can either re-optimize with tighter constraints or queue for human review.
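The re-optimize-or-escalate loop can be sketched as follows. This is a minimal illustration under stated assumptions: the optimizer is reduced to a callable that takes a single-stock cap, the compliance check returns a list of violations, and `rebalance_with_retry` plus the toy stand-ins are hypothetical names that are not part of the implementation below.

```python
from typing import Callable, Dict, List, Tuple

Weights = Dict[str, float]


def rebalance_with_retry(
    optimize: Callable[[float], Weights],     # single-stock cap -> weights
    check: Callable[[Weights], List[str]],    # weights -> violations
    initial_cap: float = 0.10,
    tighten_by: float = 0.01,
    max_attempts: int = 3,
) -> Tuple[str, Weights, List[str]]:
    """Re-optimize with a tighter cap after each rejection, then escalate."""
    cap = initial_cap
    weights: Weights = {}
    violations: List[str] = []
    for _ in range(max_attempts):
        weights = optimize(cap)
        violations = check(weights)
        if not violations:
            return "approved", weights, violations
        cap -= tighten_by        # leave more headroom under the limit
    return "queued_for_review", weights, violations


# Toy stand-ins: the "optimizer" overshoots its cap by 0.5 percentage points
def toy_optimize(cap: float) -> Weights:
    return {"HDFCBANK": cap + 0.005}


def toy_check(weights: Weights) -> List[str]:
    return [asset for asset, w in weights.items() if w > 0.10]


status, weights, violations = rebalance_with_retry(toy_optimize, toy_check)
```

The first attempt breaches the 10% limit, the second runs with a 9% cap and passes. Bounding the number of attempts matters: a loop that keeps tightening forever is just another way to fail silently.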

Python Implementation

Here’s the full implementation. This is production-caliber structure with simplified models – you’d swap in your own signal models and risk factors, but the architecture is what matters.

import numpy as np
import pandas as pd
import cvxpy as cp
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple
from enum import Enum
from datetime import datetime, date
import logging
import json
import uuid

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("rebalancer")

# --- Data Structures ---

class TradeAction(Enum):
    BUY = "BUY"
    SELL = "SELL"
    HOLD = "HOLD"


@dataclass
class Signal:
    asset: str
    alpha: float        # expected return forecast
    confidence: float   # 0-1 confidence score
    signal_type: str    # momentum, mean_reversion, etc.
    timestamp: datetime = field(default_factory=datetime.now)


@dataclass
class RiskOutput:
    target_weights: Dict[str, float]
    factor_exposures: Dict[str, float]
    portfolio_var: float    # 95% value-at-risk (not variance)
    portfolio_cvar: float   # 95% conditional value-at-risk
    tracking_error: float
    timestamp: datetime = field(default_factory=datetime.now)


@dataclass
class TradeOrder:
    asset: str
    action: TradeAction
    quantity: int
    notional: float
    urgency: float      # 0-1, affects execution speed
    impact_estimate_bps: float
    timestamp: datetime = field(default_factory=datetime.now)


@dataclass
class ComplianceResult:
    approved: bool
    violations: List[str]
    warnings: List[str]
    checked_rules: List[str]
    timestamp: datetime = field(default_factory=datetime.now)


@dataclass
class AuditRecord:
    rebalance_id: str
    stage: str
    agent: str
    inputs: dict
    outputs: dict
    reasoning: str
    timestamp: datetime = field(default_factory=datetime.now)

# --- Audit Logger ---

class AuditLogger:
    """Every agent decision goes through here. Non-negotiable."""

    def __init__(self):
        self.records: List[AuditRecord] = []
        # Set by the orchestrator at the start of a run so that all records
        # from one rebalance share an ID and get_trail() can find them.
        self.current_rebalance_id: Optional[str] = None

    def log(self, stage: str, agent: str, inputs: dict,
            outputs: dict, reasoning: str) -> AuditRecord:
        record = AuditRecord(
            # Fall back to a fresh ID only when no run ID has been set
            rebalance_id=self.current_rebalance_id or str(uuid.uuid4()),
            stage=stage, agent=agent,
            inputs=inputs, outputs=outputs,
            reasoning=reasoning,
        )
        self.records.append(record)
        logger.info(f"[AUDIT] {agent}/{stage}: {reasoning[:120]}")
        return record

    def get_trail(self, rebalance_id: str) -> List[AuditRecord]:
        return [r for r in self.records if r.rebalance_id == rebalance_id]

    def export_json(self) -> str:
        return json.dumps(
            [{"rebalance_id": r.rebalance_id,
              "stage": r.stage, "agent": r.agent,
              "reasoning": r.reasoning,
              "timestamp": r.timestamp.isoformat()}
             for r in self.records], indent=2)

# --- Signal Agent ---

class SignalAgent:
    """Generates alpha signals from market data.

    Production: plug in your own models. This uses momentum + mean-reversion.
    """

    def __init__(self, lookback_momentum: int = 60,
                 lookback_mr: int = 20,
                 audit: Optional[AuditLogger] = None):
        self.lookback_momentum = lookback_momentum
        self.lookback_mr = lookback_mr
        self.audit = audit

    def generate_signals(self, prices: pd.DataFrame) -> List[Signal]:
        signals = []
        returns = prices.pct_change().dropna()

        for asset in prices.columns:
            r = returns[asset]

            # Momentum: latest lookback-window return, z-scored against
            # the history of rolling window returns (computed once)
            if len(r) >= self.lookback_momentum:
                mom_return = (1 + r.tail(self.lookback_momentum)).prod() - 1
                rolling_mom = r.rolling(self.lookback_momentum).apply(
                    lambda x: (1 + x).prod() - 1, raw=True)
                mom_z = ((mom_return - rolling_mom.mean())
                         / (rolling_mom.std() + 1e-8))
            else:
                mom_z = 0.0

            # Mean reversion: z-score of short-term returns, sign flipped
            if len(r) >= self.lookback_mr:
                mr_return = r.tail(self.lookback_mr).mean()
                mr_z = -(mr_return - r.mean()) / (r.std() + 1e-8)
            else:
                mr_z = 0.0

            # Ensemble: weighted combination, squashed to (-1, 1)
            alpha = 0.6 * np.tanh(mom_z * 0.5) + 0.4 * np.tanh(mr_z * 0.5)
            confidence = min(abs(mom_z) / 3.0, 1.0)

            signals.append(Signal(
                asset=asset, alpha=alpha,
                confidence=confidence, signal_type="momentum_mr_ensemble"
            ))

        if self.audit:
            self.audit.log(
                stage="signal_generation", agent="SignalAgent",
                inputs={"n_assets": len(prices.columns),
                        "date_range": f"{prices.index[0]} to {prices.index[-1]}"},
                outputs={"signals": {s.asset: round(s.alpha, 4)
                                     for s in signals}},
                reasoning=f"Generated {len(signals)} signals using "
                          f"momentum({self.lookback_momentum}d) + "
                          f"mean-reversion({self.lookback_mr}d) ensemble"
            )
        return signals

# --- Risk Agent ---

class RiskAgent:
    """Takes signals + portfolio, produces risk-adjusted target weights
    via constrained optimization using cvxpy.
    """

    def __init__(self, risk_aversion: float = 2.0,
                 txn_cost_penalty: float = 0.005,
                 max_turnover: float = 0.30,
                 max_single_stock: float = 0.10,
                 max_sector: float = 0.25,
                 min_cash: float = 0.05,
                 audit: Optional[AuditLogger] = None):
        self.risk_aversion = risk_aversion
        self.txn_cost_penalty = txn_cost_penalty
        self.max_turnover = max_turnover
        self.max_single_stock = max_single_stock
        self.max_sector = max_sector
        self.min_cash = min_cash
        self.audit = audit

    def compute_factor_covariance(
            self, returns: pd.DataFrame
    ) -> Tuple[np.ndarray, np.ndarray, List[str]]:
        """Four-factor decomposition: market, size, value, momentum.

        Returns (asset covariance matrix, factor loadings, factor names).
        """
        n_assets = returns.shape[1]
        market_factor = returns.mean(axis=1)

        # Synthetic factors for demonstration; production uses
        # actual Fama-French-Carhart India factors
        size_factor = (returns.iloc[:, :n_assets // 2].mean(axis=1)
                       - returns.iloc[:, n_assets // 2:].mean(axis=1))
        value_factor = np.random.RandomState(42).randn(len(returns)) * 0.01
        mom_factor = returns.rolling(20).mean().mean(axis=1).fillna(0)

        factors = pd.DataFrame({
            "market": market_factor, "size": size_factor,
            "value": value_factor, "momentum": mom_factor
        })

        # Factor loadings via regression
        betas = np.zeros((n_assets, 4))
        for i in range(n_assets):
            y = returns.iloc[:, i].values
            X = factors.values
            valid = ~(np.isnan(y) | np.any(np.isnan(X), axis=1))
            if valid.sum() > 10:
                X_v, y_v = X[valid], y[valid]
                betas[i] = np.linalg.lstsq(X_v, y_v, rcond=None)[0]

        factor_cov = factors.cov().values

        # Idiosyncratic variance: variance of the regression residuals
        residuals = returns.values - factors.values @ betas.T
        idiosyncratic = np.diag(np.var(residuals, axis=0))

        # Covariance = B * F * B' + D
        cov_matrix = betas @ factor_cov @ betas.T + idiosyncratic

        # Ensure positive semi-definite
        eigvals = np.linalg.eigvalsh(cov_matrix)
        if eigvals.min() < 0:
            cov_matrix += np.eye(n_assets) * (abs(eigvals.min()) + 1e-6)

        return cov_matrix, betas, factors.columns.tolist()

    def optimize(self, signals: List[Signal],
                 current_weights: Dict[str, float],
                 returns: pd.DataFrame,
                 sector_map: Dict[str, str]) -> RiskOutput:
        assets = [s.asset for s in signals]
        n = len(assets)
        alpha = np.array([s.alpha * s.confidence for s in signals])
        w_current = np.array([current_weights.get(a, 0.0) for a in assets])

        cov_matrix, betas, factor_names = \
            self.compute_factor_covariance(returns[assets])

        # cvxpy optimization
        w = cp.Variable(n)
        turnover = cp.norm1(w - w_current)
        risk = cp.quad_form(w, cp.psd_wrap(cov_matrix))
        expected_alpha = alpha @ w
        txn_cost = self.txn_cost_penalty * turnover

        objective = cp.Maximize(
            expected_alpha
            - (self.risk_aversion / 2) * risk
            - txn_cost
        )

        constraints = [
            cp.sum(w) <= 1 - self.min_cash,   # cash reserve
            w >= 0,                           # long-only
            w <= self.max_single_stock,       # single-stock cap
            turnover <= self.max_turnover,    # turnover limit
        ]

        # Sector constraints
        sectors = set(sector_map.values())
        for sector in sectors:
            sector_idx = [i for i, a in enumerate(assets)
                          if sector_map.get(a) == sector]
            if sector_idx:
                constraints.append(
                    cp.sum(w[sector_idx]) <= self.max_sector
                )

        problem = cp.Problem(objective, constraints)
        # Let cvxpy pick its default conic solver; ECOS is an optional
        # install in recent cvxpy releases and may not be available.
        problem.solve(verbose=False)

        if problem.status not in ("optimal", "optimal_inaccurate"):
            logger.warning(f"Optimization status: {problem.status}. "
                           f"Falling back to current weights.")
            opt_weights = w_current
        else:
            opt_weights = w.value

        target = {a: float(round(opt_weights[i], 6))
                  for i, a in enumerate(assets)}

        # Risk metrics (covariance is daily; volatility is annualized)
        port_var = float(opt_weights @ cov_matrix @ opt_weights)
        port_vol = np.sqrt(port_var * 252)
        z_95 = 1.645
        var_95 = z_95 * port_vol
        # Gaussian expected shortfall at 95%: phi(z_95) / 0.05 ~= 2.063 sigma
        cvar_95 = 2.063 * port_vol

        factor_exp = {factor_names[j]: float(
            np.sum(opt_weights * betas[:, j]))
            for j in range(betas.shape[1])}

        active = opt_weights - w_current
        realized_turnover = float(np.sum(np.abs(active)))

        result = RiskOutput(
            target_weights=target,
            factor_exposures=factor_exp,
            portfolio_var=round(var_95, 4),
            portfolio_cvar=round(cvar_95, 4),
            tracking_error=round(float(np.sqrt(
                active @ cov_matrix @ active * 252)), 4),
        )

        if self.audit:
            self.audit.log(
                stage="risk_optimization", agent="RiskAgent",
                inputs={"n_assets": n, "risk_aversion": self.risk_aversion,
                        "current_turnover": round(realized_turnover, 4)},
                outputs={"target_weights": target,
                         "var_95": result.portfolio_var,
                         "factor_exposures": factor_exp},
                reasoning=f"Solved convex optimization ({problem.status}). "
                          f"Portfolio VaR(95%)={result.portfolio_var:.2%}, "
                          f"turnover={realized_turnover:.2%}"
            )
        return result

# --- Execution Agent ---

class ExecutionAgent:
    """Computes trade schedule with market impact estimation.

    Uses Almgren-Chriss temporary + permanent impact model.
    """

    def __init__(self, eta: float = 0.05, gamma: float = 0.01,
                 impact_exponent: float = 0.6,
                 audit: Optional[AuditLogger] = None):
        self.eta = eta                          # temporary impact coefficient
        self.gamma = gamma                      # permanent impact coefficient
        self.impact_exponent = impact_exponent
        self.audit = audit

    def compute_trades(self, current_weights: Dict[str, float],
                       target_weights: Dict[str, float],
                       portfolio_value: float,
                       adv: Dict[str, float],
                       volatility: Dict[str, float],
                       today_purchases: Optional[set] = None
                       ) -> List[TradeOrder]:
        today_purchases = today_purchases or set()
        orders = []
        t1_blocked = []   # sells actually skipped because of T+1

        # Sorted so the order list is deterministic across runs
        for asset in sorted(set(current_weights) | set(target_weights)):
            w_curr = current_weights.get(asset, 0.0)
            w_tgt = target_weights.get(asset, 0.0)
            delta = w_tgt - w_curr

            if abs(delta) < 1e-6:
                continue

            # T+1 check: can't sell what was bought today
            if delta < 0 and asset in today_purchases:
                logger.warning(
                    f"T+1 block: cannot sell {asset} (bought today)")
                t1_blocked.append(asset)
                continue

            notional = abs(delta) * portfolio_value
            trade_volume = notional   # simplified; use price for shares
            avg_daily_vol = adv.get(asset, 1e8)
            vol = volatility.get(asset, 0.02)
            participation = trade_volume / avg_daily_vol

            # Almgren-Chriss impact
            temp_impact = (self.eta * vol *
                           (participation ** self.impact_exponent))
            perm_impact = self.gamma * vol * participation
            total_impact_bps = (temp_impact + perm_impact) * 10000

            # Regulatory costs: STT on delivery sells, stamp duty on buys
            if delta < 0:
                regulatory_cost_bps = 10.0   # STT: 0.1% on sell delivery
            else:
                regulatory_cost_bps = 1.5    # stamp duty: 0.015% on buys

            action = TradeAction.BUY if delta > 0 else TradeAction.SELL
            urgency = min(abs(delta) / 0.05, 1.0)

            orders.append(TradeOrder(
                asset=asset, action=action,
                quantity=int(notional / 100),   # placeholder price
                notional=round(notional, 2),
                urgency=urgency,
                impact_estimate_bps=round(
                    total_impact_bps + regulatory_cost_bps, 2),
            ))

        if self.audit:
            total_notional = sum(o.notional for o in orders)
            # Notional-weighted average of impact plus regulatory cost
            weighted_cost_bps = (
                sum(o.impact_estimate_bps * o.notional for o in orders)
                / max(total_notional, 1))
            self.audit.log(
                stage="execution_planning", agent="ExecutionAgent",
                inputs={"portfolio_value": portfolio_value,
                        "today_purchases": sorted(today_purchases)},
                outputs={"n_orders": len(orders),
                         "total_notional": total_notional,
                         "t1_blocked": t1_blocked,
                         "avg_impact_bps": round(float(np.mean(
                             [o.impact_estimate_bps for o in orders]
                         )), 2) if orders else 0},
                reasoning=f"Generated {len(orders)} orders. "
                          f"T+1 blocked: {len(t1_blocked)} assets. "
                          f"Notional-weighted estimated cost: "
                          f"{weighted_cost_bps:.1f} bps"
            )
        return orders

# --- Compliance Agent ---

class ComplianceAgent:
    """Validates trades against SEBI, FEMA, and internal rules."""

    SEBI_SINGLE_STOCK_LIMIT = 0.10
    SEBI_SECTOR_CAP_MULTICAP = 0.25
    MULTICAP_LARGE_MIN = 0.25
    MULTICAP_MID_MIN = 0.25
    MULTICAP_SMALL_MIN = 0.25
    MIN_CASH = 0.05
    MAX_DAILY_TURNOVER = 0.30
    STT_ANNUAL_BUDGET_BPS = 50   # max 50 bps annual turnover cost
    # 1 bp of portfolio weight; absorbs solver and rounding noise when a
    # constraint is binding at exactly the regulatory limit
    WEIGHT_TOLERANCE = 1e-4

    def __init__(self, fii_limits: Optional[Dict[str, float]] = None,
                 circuit_breaker_stocks: Optional[set] = None,
                 audit: Optional[AuditLogger] = None):
        self.fii_limits = fii_limits or {}
        self.circuit_breaker_stocks = circuit_breaker_stocks or set()
        self.audit = audit

    def validate(self, orders: List[TradeOrder],
                 post_trade_weights: Dict[str, float],
                 sector_map: Dict[str, str],
                 cap_category: Dict[str, str],
                 is_nri_portfolio: bool = False) -> ComplianceResult:
        violations = []
        warnings = []
        checked = []
        tol = self.WEIGHT_TOLERANCE

        # 1. Single stock concentration
        checked.append("SEBI_single_stock_10pct")
        for asset, w in post_trade_weights.items():
            if w > self.SEBI_SINGLE_STOCK_LIMIT + tol:
                violations.append(
                    f"{asset} weight {w:.1%} exceeds SEBI "
                    f"10% single-stock limit")

        # 2. Sector concentration
        checked.append("SEBI_sector_cap_25pct")
        sector_weights = {}
        for asset, w in post_trade_weights.items():
            s = sector_map.get(asset, "Unknown")
            sector_weights[s] = sector_weights.get(s, 0) + w
        for sector, sw in sector_weights.items():
            if sw > self.SEBI_SECTOR_CAP_MULTICAP + tol:
                violations.append(
                    f"Sector '{sector}' weight {sw:.1%} "
                    f"exceeds 25% cap")

        # 3. Multi-cap category allocation
        # Kept as warnings, as in the original design; a live multi-cap
        # scheme would likely escalate a breach to a violation.
        checked.append("SEBI_multicap_category_min")
        cap_weights = {"large": 0, "mid": 0, "small": 0}
        for asset, w in post_trade_weights.items():
            cat = cap_category.get(asset, "large")
            if cat in cap_weights:
                cap_weights[cat] += w
        total_equity = sum(cap_weights.values())
        if total_equity > 0:
            for cat, min_w in [("large", self.MULTICAP_LARGE_MIN),
                               ("mid", self.MULTICAP_MID_MIN),
                               ("small", self.MULTICAP_SMALL_MIN)]:
                share = cap_weights[cat] / total_equity
                if share < min_w:
                    warnings.append(
                        f"{cat}-cap at {share:.1%}, "
                        f"below SEBI minimum of {min_w:.0%}")
                elif share < min_w * 1.1:
                    warnings.append(
                        f"{cat}-cap at {share:.1%}, "
                        f"approaching SEBI minimum of {min_w:.0%}")

        # 4. Circuit breaker check
        checked.append("NSE_circuit_breaker")
        for order in orders:
            if order.asset in self.circuit_breaker_stocks:
                violations.append(
                    f"{order.asset} hit circuit breaker -- "
                    f"cannot execute {order.action.value}")

        # 5. FII/NRI limits (FEMA)
        # Simplified: compares portfolio weight with a per-asset cap
        # supplied by the caller, not company-level ownership.
        if is_nri_portfolio:
            checked.append("FEMA_FPI_NRI_limits")
            for asset, w in post_trade_weights.items():
                fii_limit = self.fii_limits.get(asset, 1.0)
                if w > fii_limit:
                    violations.append(
                        f"{asset}: NRI weight {w:.1%} exceeds "
                        f"FEMA limit {fii_limit:.1%}")

        # 6. Cash adequacy
        checked.append("internal_min_cash")
        total_invested = sum(post_trade_weights.values())
        cash_weight = 1.0 - total_invested
        if cash_weight < self.MIN_CASH - tol:
            violations.append(
                f"Cash weight {cash_weight:.1%} below "
                f"minimum {self.MIN_CASH:.0%}")

        # 7. Impact cost warning
        checked.append("impact_cost_warning")
        high_impact = [o for o in orders if o.impact_estimate_bps > 30]
        if high_impact:
            warnings.append(
                f"{len(high_impact)} orders with >30 bps "
                f"estimated impact cost")

        approved = len(violations) == 0
        result = ComplianceResult(
            approved=approved, violations=violations,
            warnings=warnings, checked_rules=checked,
        )

        if self.audit:
            self.audit.log(
                stage="compliance_check", agent="ComplianceAgent",
                inputs={"n_orders": len(orders),
                        "is_nri": is_nri_portfolio},
                outputs={"approved": approved,
                         "n_violations": len(violations),
                         "n_warnings": len(warnings)},
                reasoning=f"{'APPROVED' if approved else 'REJECTED'}. "
                          f"Checked {len(checked)} rules. "
                          f"Violations: {violations if violations else 'None'}. "
                          f"Warnings: {warnings if warnings else 'None'}"
            )
        return result

# --- Orchestrator ---

class RebalanceOrchestrator:
    """Coordinates the four agents in sequence.

    Each stage feeds into the next. Full audit trail.
    """

    def __init__(self, signal_agent: SignalAgent,
                 risk_agent: RiskAgent,
                 execution_agent: ExecutionAgent,
                 compliance_agent: ComplianceAgent,
                 audit: AuditLogger):
        self.signal = signal_agent
        self.risk = risk_agent
        self.execution = execution_agent
        self.compliance = compliance_agent
        self.audit = audit

    def run(self, prices: pd.DataFrame,
            current_weights: Dict[str, float],
            portfolio_value: float,
            sector_map: Dict[str, str],
            cap_category: Dict[str, str],
            adv: Dict[str, float],
            volatility: Dict[str, float],
            today_purchases: Optional[set] = None,
            is_nri: bool = False) -> dict:
        rebalance_id = str(uuid.uuid4())[:8]
        # Share one ID across the audit records of this run
        self.audit.current_rebalance_id = rebalance_id
        logger.info(f"=== Rebalance {rebalance_id} started ===")

        # Stage 1: Signal generation
        signals = self.signal.generate_signals(prices)

        # Stage 2: Risk-adjusted optimization
        returns = prices.pct_change().dropna()
        risk_output = self.risk.optimize(
            signals, current_weights, returns, sector_map)

        # Stage 3: Execution planning
        orders = self.execution.compute_trades(
            current_weights, risk_output.target_weights,
            portfolio_value, adv, volatility, today_purchases)

        # Post-trade weights reflect the orders actually generated, so
        # T+1-blocked sells are not assumed to have executed
        post_trade_weights = dict(current_weights)
        for o in orders:
            sign = 1.0 if o.action is TradeAction.BUY else -1.0
            post_trade_weights[o.asset] = (
                post_trade_weights.get(o.asset, 0.0)
                + sign * o.notional / portfolio_value)

        # Stage 4: Compliance validation
        compliance = self.compliance.validate(
            orders, post_trade_weights,
            sector_map, cap_category, is_nri)

        result = {
            "rebalance_id": rebalance_id,
            "approved": compliance.approved,
            "target_weights": risk_output.target_weights,
            "post_trade_weights": post_trade_weights,
            "orders": [(o.asset, o.action.value, o.notional)
                       for o in orders],
            "risk": {
                "var_95": risk_output.portfolio_var,
                "cvar_95": risk_output.portfolio_cvar,
                "factor_exposures": risk_output.factor_exposures,
            },
            "compliance": {
                "violations": compliance.violations,
                "warnings": compliance.warnings,
            },
        }

        logger.info(f"=== Rebalance {rebalance_id}: "
                    f"{'APPROVED' if compliance.approved else 'REJECTED'} ===")
        return result

Running It

Here’s a minimal example that exercises the full pipeline:

# Generate synthetic data for 10 NSE stocks
np.random.seed(42)

assets = ["RELIANCE", "TCS", "HDFCBANK", "INFY", "ICICIBANK",
          "HINDUNILVR", "ITC", "SBIN", "BHARTIARTL", "KOTAKBANK"]
n_days = 252

prices = pd.DataFrame(
    np.cumprod(1 + np.random.randn(n_days, len(assets)) * 0.02, axis=0) * 100,
    columns=assets,
    index=pd.bdate_range(end=date.today(), periods=n_days)
)

sector_map = {
    "RELIANCE": "Energy", "TCS": "IT", "HDFCBANK": "Financials",
    "INFY": "IT", "ICICIBANK": "Financials", "HINDUNILVR": "FMCG",
    "ITC": "FMCG", "SBIN": "Financials", "BHARTIARTL": "Telecom",
    "KOTAKBANK": "Financials",
}

cap_category = {a: "large" for a in assets}
current_weights = {a: 0.095 for a in assets}   # ~equal weight
adv = {a: 5e8 for a in assets}                 # INR 50 Cr ADV
volatility = {a: 0.02 for a in assets}         # 2% daily vol

audit = AuditLogger()

orchestrator = RebalanceOrchestrator(
    signal_agent=SignalAgent(audit=audit),
    risk_agent=RiskAgent(audit=audit),
    execution_agent=ExecutionAgent(audit=audit),
    compliance_agent=ComplianceAgent(audit=audit),
    audit=audit,
)

result = orchestrator.run(
    prices=prices,
    current_weights=current_weights,
    portfolio_value=1_00_00_000,   # INR 1 Crore
    sector_map=sector_map,
    cap_category=cap_category,
    adv=adv, volatility=volatility,
    today_purchases={"RELIANCE"},  # simulate T+1 block
    is_nri=False,
)

# Inspect the audit trail
print(audit.export_json())

Note the today_purchases={"RELIANCE"} – this simulates a scenario where RELIANCE was bought earlier today. The execution agent will block any sell order for it, respecting T+1 settlement.

Governance and Observability

The AuditLogger in the code above is deliberately simple, but it captures the essential pattern: every agent, at every stage, logs its inputs, outputs, and reasoning. In production, this goes to a structured log store (we use a combination of PostgreSQL for queryable records and S3 for raw JSON blobs).

This is what AI governance actually looks like in practice. Not a policy document that sits on a shelf. Not a quarterly review meeting. It’s infrastructure that makes every automated decision reconstructable after the fact.

For a SEBI-regulated entity, you need to answer questions like:

- “Why did the system sell 500 shares of HDFCBANK on March 3rd?” The audit trail shows: the signal agent flagged a negative momentum signal (confidence 0.72), the risk agent computed that financials exposure was 32% and needed reduction, the execution agent scheduled a TWAP over 45 minutes to minimize impact, and the compliance agent approved after verifying the post-trade weight (8.2%) was within limits.

- “Did the system consider the impact on FII ownership limits?” The compliance agent’s checked_rules list shows whether FEMA rules were evaluated, and what the outcome was.

Each rebalance produces four audit records – one per agent. These are immutable. If a regulator wants to understand a trading pattern across six months, you can query the audit store by date range, agent, or asset, and reconstruct the decision chain for every single rebalance.
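Immutability is easiest to trust when the store enforces it. The sketch below uses an in-memory SQLite database purely as a stand-in for the production store; the table layout, trigger names, sample timestamps, and the `append` and `trail` helpers are all assumptions made for illustration.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")   # stand-in for the production store
conn.executescript("""
CREATE TABLE audit (
    id INTEGER PRIMARY KEY,
    rebalance_id TEXT NOT NULL,
    agent TEXT NOT NULL,
    stage TEXT NOT NULL,
    ts TEXT NOT NULL,
    payload TEXT NOT NULL
);
-- Reject any attempt to rewrite or remove history
CREATE TRIGGER audit_no_update BEFORE UPDATE ON audit
BEGIN SELECT RAISE(ABORT, 'audit records are immutable'); END;
CREATE TRIGGER audit_no_delete BEFORE DELETE ON audit
BEGIN SELECT RAISE(ABORT, 'audit records are immutable'); END;
""")


def append(rebalance_id, agent, stage, ts, payload):
    """Insert one audit record; inserts are the only write allowed."""
    conn.execute(
        "INSERT INTO audit (rebalance_id, agent, stage, ts, payload) "
        "VALUES (?, ?, ?, ?, ?)",
        (rebalance_id, agent, stage, ts, json.dumps(payload)))


def trail(rebalance_id):
    """Return the decision chain for one rebalance, in insertion order."""
    rows = conn.execute(
        "SELECT agent, stage, payload FROM audit "
        "WHERE rebalance_id = ? ORDER BY id", (rebalance_id,))
    return [(agent, stage, json.loads(p)) for agent, stage, p in rows]


append("r1", "SignalAgent", "signal_generation",
       "2025-03-03T09:20:00", {"n_assets": 10})
append("r1", "ComplianceAgent", "compliance_check",
       "2025-03-03T09:21:00", {"approved": True})

try:
    conn.execute("UPDATE audit SET payload = '{}' WHERE rebalance_id = 'r1'")
    tampered = True
except sqlite3.Error:
    tampered = False
```

The same idea carries over to a server database through revoked UPDATE and DELETE privileges or equivalent triggers, so immutability does not depend on application code behaving well.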

This connects directly to the broader AI governance challenge in financial services. As more firms deploy automated or semi-automated portfolio management, the question isn’t whether to use AI – it’s whether you can prove, after the fact, that the AI behaved as intended. Multi-agent architecture makes this provable by construction, because each agent’s scope is bounded and each agent’s decisions are independently logged.

Limitations and Honest Caveats

This architecture is sound. The specific implementations are simplified. Here’s what you’d need to harden for real money:

Signal models degrade. The momentum + mean-reversion ensemble above is a placeholder. Real alpha signals have half-lives measured in weeks to months. You need continuous signal research and a framework for adding/removing signals without disrupting the pipeline. The agent architecture helps here – swap out the signal agent’s internals without touching anything else. For a deeper look at novel signal construction using India Stack data, see Factor Models on India Stack Data.

Market impact models are approximations. The Almgren-Chriss framework assumes a specific functional form for impact that may not hold for Indian mid-caps, where order books are thinner and more fragmented. Calibrate the impact coefficients ($\eta$, $\gamma$, and the exponent) to your actual execution data. I’ve found that NSE mid-caps need $\eta \approx 0.08$ and an exponent closer to 0.75. Every trader I’ve worked with on the execution side has stories about backtests that looked great until you accounted for the order book reality at 2:30 PM on expiry day.
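Calibrating the exponent comes down to a straight-line fit in log space. The sketch below fits only the temporary-impact term and uses synthetic, noise-free points generated from the mid-cap values mentioned above, so it recovers them exactly. With real fills you would regress realised slippage, normalised by daily volatility, on participation rate. `fit_impact_power_law` is a hypothetical helper.

```python
import math
from typing import List, Tuple


def fit_impact_power_law(participation: List[float],
                         cost_over_vol: List[float]) -> Tuple[float, float]:
    """Fit cost / sigma = eta * participation ** beta by OLS in log space.

    Returns (eta, beta).
    """
    xs = [math.log(p) for p in participation]
    ys = [math.log(c) for c in cost_over_vol]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    beta = sxy / sxx                          # slope is the exponent
    eta = math.exp(mean_y - beta * mean_x)    # intercept gives eta
    return eta, beta


# Synthetic, noise-free observations generated from eta=0.08, beta=0.75
rates = [0.001, 0.005, 0.01, 0.05, 0.10]
costs = [0.08 * r ** 0.75 for r in rates]
eta_hat, beta_hat = fit_impact_power_law(rates, costs)
```

Real execution data is noisy and heteroskedastic, so expect to bucket fills by participation rate and to refit periodically rather than trust a single regression.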

Factor models need Indian data. The four-factor decomposition above uses synthetic data. For production, you need Indian factor returns – the IIM Ahmedabad CMIE database or NSE’s own factor indices are starting points, but building clean factor returns for BSE/NSE-listed stocks is a non-trivial data engineering problem.

Compliance rules change. SEBI issues circulars frequently. Your compliance agent needs to be parameterized, not hardcoded. The rule set should live in a configuration store that can be updated without redeploying code.
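A parameterized rule set can be as simple as a list of limits loaded from JSON. The schema below (`rule_id`, `scope`, `max_weight`) and the names `LimitRule`, `load_rules`, and `check_limits` are assumptions made for this sketch. In practice the JSON would come from your configuration store rather than an inline string.

```python
import json
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LimitRule:
    rule_id: str
    scope: str          # "asset" or "sector"
    max_weight: float


def load_rules(config_json: str) -> List[LimitRule]:
    """Parse limit rules from a JSON document with a "limits" list."""
    return [LimitRule(**r) for r in json.loads(config_json)["limits"]]


def check_limits(rules: List[LimitRule],
                 exposures: Dict[str, Dict[str, float]]) -> List[str]:
    """exposures maps scope -> {name: weight}; returns violation messages."""
    violations = []
    for rule in rules:
        for name, weight in exposures.get(rule.scope, {}).items():
            if weight > rule.max_weight:
                violations.append(
                    f"{rule.rule_id}: {name} at {weight:.1%} "
                    f"exceeds {rule.max_weight:.0%}")
    return violations


CONFIG = """
{"version": "example-1",
 "limits": [
   {"rule_id": "single_stock_cap", "scope": "asset", "max_weight": 0.10},
   {"rule_id": "sector_cap", "scope": "sector", "max_weight": 0.25}
 ]}
"""

rules = load_rules(CONFIG)
violations = check_limits(rules, {
    "asset": {"TCS": 0.08, "INFY": 0.12},
    "sector": {"IT": 0.20},
})
```

Recording the config version alongside each compliance audit record lets you show later which rule set was in force when a trade was approved.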

Concurrency and ordering matter. The sequential orchestration above is the simplest correct approach. In production, you might want the signal agent running continuously (streaming signals) while the risk agent runs on a schedule. This introduces consistency challenges – you need snapshot isolation to ensure the risk agent optimizes against a consistent set of signals and portfolio state.
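Within a single process, snapshot isolation can be approximated by publishing immutable, versioned snapshots and letting each consumer pin one. This is a minimal in-process sketch; `Snapshot` and `SnapshotStore` are hypothetical names. Across separate services you would more likely rely on versioned records or the database’s own isolation levels.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import Mapping


@dataclass(frozen=True)
class Snapshot:
    version: int
    signals: Mapping[str, float]
    weights: Mapping[str, float]


class SnapshotStore:
    """Writers publish whole snapshots; readers never see a partial update."""

    def __init__(self):
        self._latest = Snapshot(0, MappingProxyType({}), MappingProxyType({}))

    def publish(self, signals: dict, weights: dict) -> Snapshot:
        # Copy inputs so later mutation by the producer cannot leak in
        snap = Snapshot(self._latest.version + 1,
                        MappingProxyType(dict(signals)),
                        MappingProxyType(dict(weights)))
        self._latest = snap          # single reference swap
        return snap

    def latest(self) -> Snapshot:
        return self._latest


store = SnapshotStore()
live_signals = {"TCS": 0.01}
store.publish(live_signals, {"TCS": 0.095})

pinned = store.latest()              # risk agent pins this version
live_signals["TCS"] = -0.02          # streaming update arrives mid-run
store.publish(live_signals, {"TCS": 0.095})
```

The risk agent keeps optimizing against `pinned` (version 1) even though version 2 is already published. Logging the pinned version in the audit record ties each set of target weights to the exact inputs that produced it.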

This is not financial advice. This is an engineering architecture. The specific parameter values, signal models, and risk limits are illustrative. Do not deploy this with real capital without extensive backtesting, paper trading, and regulatory review.

The full codebase above is a few hundred lines of Python. The value isn’t in the specific signal model or impact formula – those are domain-specific and will evolve. The value is in the architecture: four agents, clear interfaces, independent audit trails, and a compliance gate that nothing bypasses.

If you’re thinking about adding an RL-based overlay on top of this architecture, I cover that in Reinforcement Learning for Dynamic Asset Allocation. The broader governance challenge of deploying agents like these at enterprise scale – without creating exactly the kind of ungoverned sprawl that regulators are starting to crack down on – is something I address in Agent Sprawl Is the New Shadow IT.